If we generalize the notation for a minute to Hypothesis and Evidence, we can write the standard form of Bayes rule as:

P(H|E) = P(E|H) P(H) / P(E)

An easy way to remember this is to think of the H terms on the right-hand side cancelling, though of course they do not actually do so.

Look at the right-hand side. The first term, P(E|H), is computed as follows: under our model that the cookie jar in question is jar A, and that jar A contains equal numbers of the two cookie types, the probability of drawing either type is 1/2. This term is called the likelihood (of H, in connection with this observation or evidence).

The second term, P(H), is the probability that the cookie jar is jar A, given our current state of knowledge. Before the first draw, we might (I would at least) assume that P(A) = P(B), so this term is also 1/2. This is called the prior.

The third term, in the denominator, is P(E). This term is called the marginal probability or, sometimes, the evidence. It looks simple but is actually compound: it is P(E) under all hypotheses. That is:

P(E) = P(E|H) P(H) + P(E|~H) P(~H)

where ~H is read as "not H" (the hypothesis is not true). Or, in cookie-land:

P(CC) = P(CC|A) P(A) + P(CC|B) P(B)

The left-hand term of Bayes rule, P(H|E), is, of course, the probability we seek. It is called the posterior probability: the probability of H (here, A) given the evidence observed.

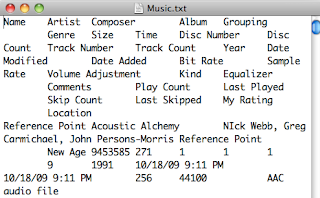

Calculating the posterior is a simple matter, as you can see from the slide above. It is reassuring to get the same answer that we arrived at earlier by a simple, intuitive process. Are you underwhelmed?
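For anyone who would rather check the arithmetic than trust a slide, here is a minimal Python sketch of the single-step calculation. The function name `posterior` is made up for illustration. The value P(CC|B) = 1/4 is an assumption: it is not stated in the text here, and was chosen because it reproduces the 2/3 posterior used in the next step.

```python
from fractions import Fraction


def posterior(likelihood_h, prior_h, likelihood_not_h):
    """Return P(H|E) given P(E|H), P(H), and P(E|~H)."""
    prior_not_h = 1 - prior_h
    # Marginal probability P(E): the evidence summed over both hypotheses.
    marginal = likelihood_h * prior_h + likelihood_not_h * prior_not_h
    return likelihood_h * prior_h / marginal


p_cc_given_a = Fraction(1, 2)  # jar A holds equal numbers of both types
p_cc_given_b = Fraction(1, 4)  # ASSUMPTION: not given in the text
prior_a = Fraction(1, 2)       # P(A) = P(B) before the first draw

first = posterior(p_cc_given_a, prior_a, p_cc_given_b)
print(first)  # 2/3
```

Using `Fraction` rather than floats keeps the result exact, so it can be compared directly with the hand calculation.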

The power of Bayes rule lies in its repeated application. Suppose we replace the cookie in the jar (you've eaten it?---well, go get another one from the package my sister sent). Mix well, then draw again. It's another chocolate chip cookie. Our lucky day!

We have to update two values in the equation before we can do the calculation. We do not need to change the likelihood, because we replaced the cookie that was drawn.

We update the prior by setting it equal to 2/3, the posterior that was calculated in the first step. We must also update the marginal probability, because it depends on the prior, which has changed. It's like this:
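As a rough sketch of the repeated update in Python: each pass feeds the previous posterior back in as the new prior and recomputes the marginal. The names `update` and `history` are illustrative only. As before, P(CC|B) = 1/4 is an assumption that is not stated in the text; it is consistent with the 2/3 posterior from the first step.

```python
from fractions import Fraction

P_CC_GIVEN_A = Fraction(1, 2)  # jar A: equal numbers of both types
P_CC_GIVEN_B = Fraction(1, 4)  # ASSUMPTION: not given in the text


def update(prior_a, p_e_given_a, p_e_given_b):
    """One application of Bayes rule; returns (marginal, posterior for A)."""
    marginal = p_e_given_a * prior_a + p_e_given_b * (1 - prior_a)
    return marginal, p_e_given_a * prior_a / marginal


prior = Fraction(1, 2)  # agnostic starting point, P(A) = P(B)
history = []
for draw in (1, 2):
    # Two CC cookies in a row, with replacement, so the likelihoods stay fixed.
    marginal, prior = update(prior, P_CC_GIVEN_A, P_CC_GIVEN_B)
    history.append((marginal, prior))
    print(f"draw {draw}: P(CC) = {marginal}, P(A|CC) = {prior}")
```

Under that assumption, the first pass gives P(CC) = 3/8 and a posterior of 2/3; the second gives P(CC) = 5/12 and a posterior of 4/5.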

Bayesian analysis:

• is about updating expectations or "beliefs" based on evidence

• requires an estimate of prior probability for the hypothesis (although we may be deliberately agnostic)

Next time: dice

UPDATE:

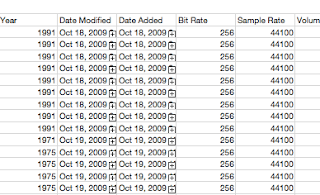

It is worth considering the case where the second draw is O rather than CC. Now we have:

P(A|O) = P(O|A) P(A) / P(O)

P(O|A) = 1 - P(CC|A) = 1/2

P(A) = 2/3 (unchanged from before)

P(O) = 1 - P(CC) = 7/12

And thus we calculate: (1/2 * 2/3) / (7/12) = 4/7.
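The same numbers can be checked with a few lines of Python. This is only a sketch: the variable names are invented for illustration, and P(O|B) = 3/4 rests on the assumption that P(CC|B) = 1/4, which is not stated in the text but is consistent with P(O) = 7/12.

```python
from fractions import Fraction

prior_a = Fraction(2, 3)       # posterior after the first CC draw
p_o_given_a = Fraction(1, 2)   # 1 - P(CC|A)
p_o_given_b = Fraction(3, 4)   # ASSUMPTION: 1 - P(CC|B), with P(CC|B) = 1/4

# Marginal probability of drawing O, summed over both jars.
p_o = p_o_given_a * prior_a + p_o_given_b * (1 - prior_a)

posterior_a = p_o_given_a * prior_a / p_o
posterior_b = 1 - posterior_a

print(p_o)                        # 7/12
print(posterior_a)                # 4/7
print(posterior_a - posterior_b)  # 1/7
```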

Notice that we do not return to the naive initial probability of 1/2. Instead, having seen one of each type, we favor A over B by 1 part in 7 (4/7 versus 3/7). The reason for this is that there are more O cookies overall. Therefore, the second observation of O does not give as much information as the first observation of CC, because P(E) is higher for O. We are more impressed by having drawn the CC cookie, and so we favor A.

The observation that the sun rose today in the east is not very helpful in deciding any hypothesis, even one about the sun, because it always rises in the east.